TL;DR

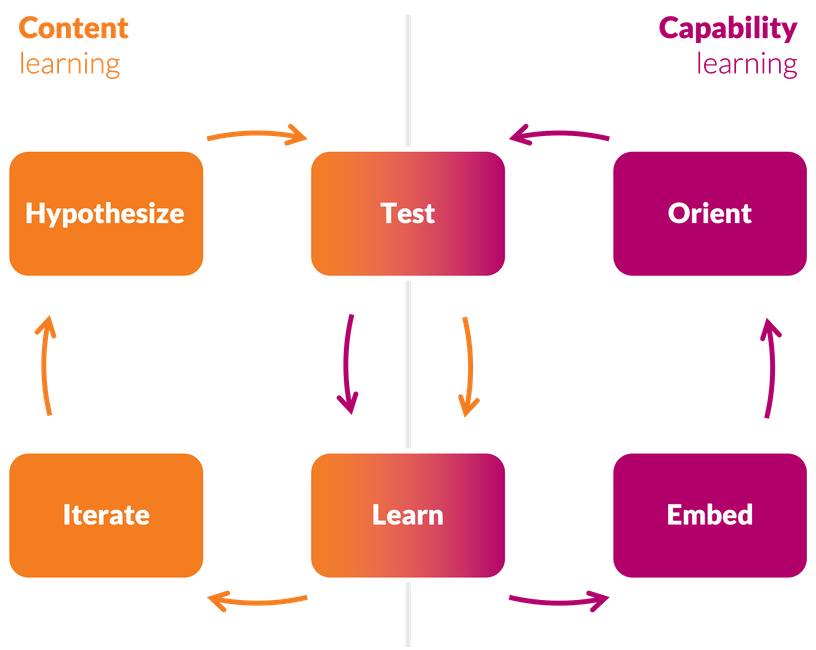

Learning about user pain points, and about the solutions and businesses that might address them, is good.

But it leaves your team treading water.

You will only meaningfully improve your capabilities if you make Capability Learning just as much of a priority.

So:

🔭 "Orient" to top team capability gaps.

🧑‍🔬 Find natural ways to "test" fixes in daily work.

🧑‍🎓 "Learn" lessons and check whether the fixes mattered.

🧱 "Embed" what worked in your regular approach.

But watch out. This is harder in reality than in theory!